With Oracle donating the OpenOffice.org trademark and code to the Apache Foundation, one point made frequently concerns licensing differences. LibreOffice is under a weak copyleft license; that is, changes to the existing core need to be made public (at the time a product ships). In contrast, the to-be-Apache OpenOffice would be available under a non-copyleft license, meaning nobody is required to contribute anything back.

It is said that non-copyleft, or permissive, licenses are more popular with corporations, because they allow for much more flexibility in what, and when, to contribute back. Overall, it is conjectured, such projects will still see enough open contributions from corporate participants, because private forks are not cost-effective.

Let’s now have a look at how all that applies to OpenOffice.org. There are a few things to know beforehand. First, the code represents almost 20 years of development and is, in many places, a sedimentation of bugfixes upon bugfixes, which overall results in highly coupled and fragile code. Second, OOo has a mature component framework, API, and extension mechanism that make it easy for third parties to innovate on top of the existing core.

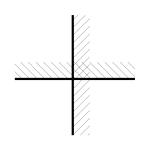

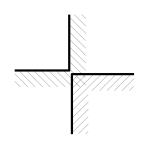

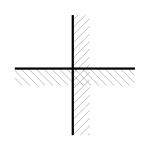

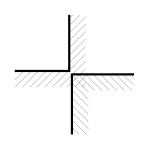

Given that, it is rather disadvantageous to keep changes to existing core code private, because of the high internal maintenance costs (and a very non-linear relation between the size of the private change and the risk of having it broken quite badly when merging new code from the upstream community). Conversely, it is highly advantageous to add more extension points to the core code and to reduce the internal coupling, since that enables independent functionality later on (which corporations could use to differentiate themselves).

So it seems that the differences for the ecosystem between weak copyleft and permissive licensing are, in the case at hand, negligible: for the former, responsible behaviour is enforced by the license; for the latter, by technical reality. Beyond existing core code, everyone is free not to publish changes either way. Of course, an Apache-licensed OpenOffice.org would permit taking the project all-proprietary at any given point in time, but such a move is clearly not in the interest of the community, and specifically not in the interest of the Apache Foundation.

Of the remaining differences, the constraints on, e.g., the timing of contributing back are simply too minor to justify the overhead of running two communities in parallel. That’s the main reason I oppose the idea: as a software engineer, I try hard to avoid duplication for no good reason.